As Kubernetes grows in popularity, the barrier to entry keeps getting lower. Security issues, however, have not gone away. This article discusses two open-source tools for auditing cluster security, both built by Aqua Security, a well-known name in this field.

kube-bench

kube-bench is a Go application that checks whether a Kubernetes cluster meets the CIS Kubernetes Benchmark guidelines. The project boasts a rich history (it was started on GitHub back in 2017) and has many loyal followers (as its 4500 stars can attest).

So what is the CIS (Center for Internet Security)? It’s a nonprofit organization that addresses cybersecurity issues using feedback from the community. The CIS Benchmark, in turn, is a list of recommendations for setting up an environment protected against cyber attacks. These recommendations include looking for overly permissive config file privileges and potentially hazardous cluster component settings, identifying unprotected accounts, and checking general and network policies.

Kube-bench supports Kubernetes versions 1.15 and up and is also compatible with the GKE, EKS, and OCP (versions 3.10 and 4.1) platforms.

Let’s take a look at kube-bench in action in the Kubernetes cluster (v1.19 will be used).

Installing and configuring kube-bench

The easiest way to run kube-bench is to download a ready-made executable and configure it using command-line arguments:

curl -L https://github.com/aquasecurity/kube-bench/releases/download/v0.6.3/kube-bench_0.6.3_linux_amd64.tar.gz -o kube-bench_0.6.3_linux_amd64.tar.gz && \

tar -xvf kube-bench_0.6.3_linux_amd64.tar.gz && \

./kube-bench --config-dir `pwd`/cfg --config `pwd`/cfg/config.yaml

You can also run kube-bench in a Docker container:

docker run --rm --pid=host -v /etc:/etc:ro -v /var/lib/etcd:/var/lib/etcd:ro -v /var/lib/kubelet/config.yaml:/var/lib/kubelet/config.yaml:ro -v $(which kubectl):/usr/local/mount-from-host/bin/kubectl -v $HOME/.kube:/.kube -e KUBECONFIG=/.kube/config -it aquasec/kube-bench:latest run

Furthermore, there is also a ready-made manifest for running kube-bench in a cluster. In this case, two instances of kube-bench (each with its own set of input parameters) must be run to render the testing valid: one on the master node and another on the worker node. However, the manifest provided by the developers only performs a small subset of the available tests.

The tool works out of the box, so there is no need to configure it. You can disable the unnecessary tests or add your own by modifying configuration files in the ./cfg/ directory. In our case, according to the documentation, the CIS 1.20 configuration was used when we ran tests in the Kubernetes 1.19 cluster.

Results and recommendations

The report is divided into five thematic blocks with self-explanatory titles:

- Master Node Security Configuration;

- Etcd Node Configuration;

- Control Plane Configuration;

- Worker Node Security Configuration;

- Kubernetes Policies.

The output of the program is pretty informative and there’s an explanation for each type of test. The only thing that is not obvious in this output is the Automated/Manual marks next to each test. Unfortunately, there is no simple explanation here. The documentation contains the following note:

[..] If the test is Manual, this always generates WARN (because the user has to run it manually) [..]

However, this note does not clarify much, since the screenshot below shows Manual-type tests running normally.

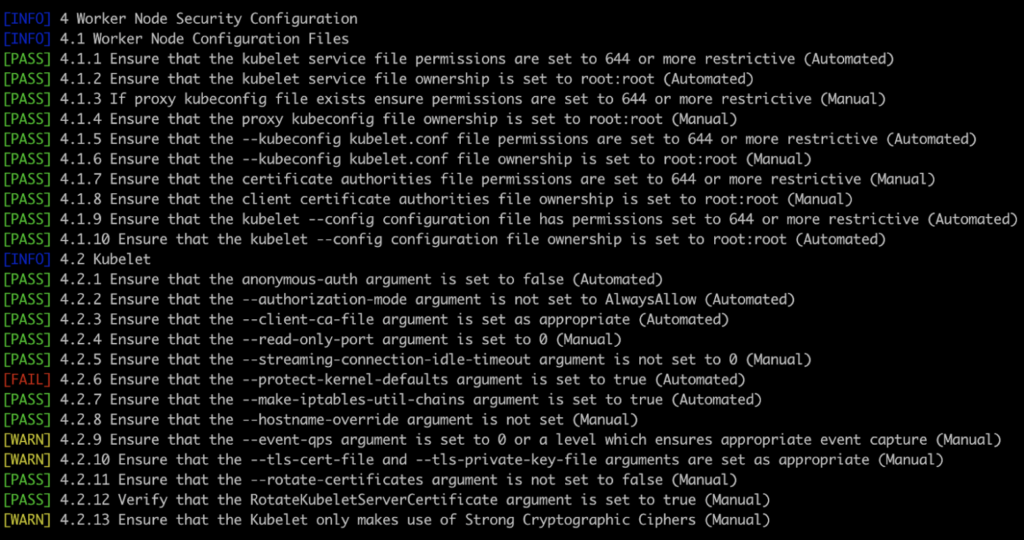

For instance, here’s the output of the Worker Node Security Configuration:

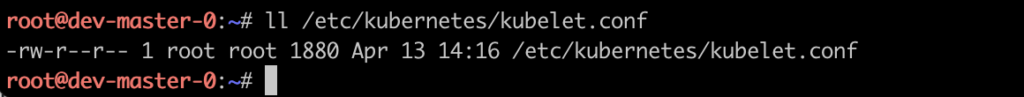

As you can see, 23 tests were performed. Let’s examine points 4.1.5 and 4.1.6 in more detail using the corresponding configuration file. According to that file, kube-bench verified the kubelet.conf permissions and found that they were correct and that the file ownership was set to root. Let’s check them manually and confirm that kube-bench is correct:

It’s also worth noting that the tool doesn’t work correctly with symlinks and may return false-negative results.

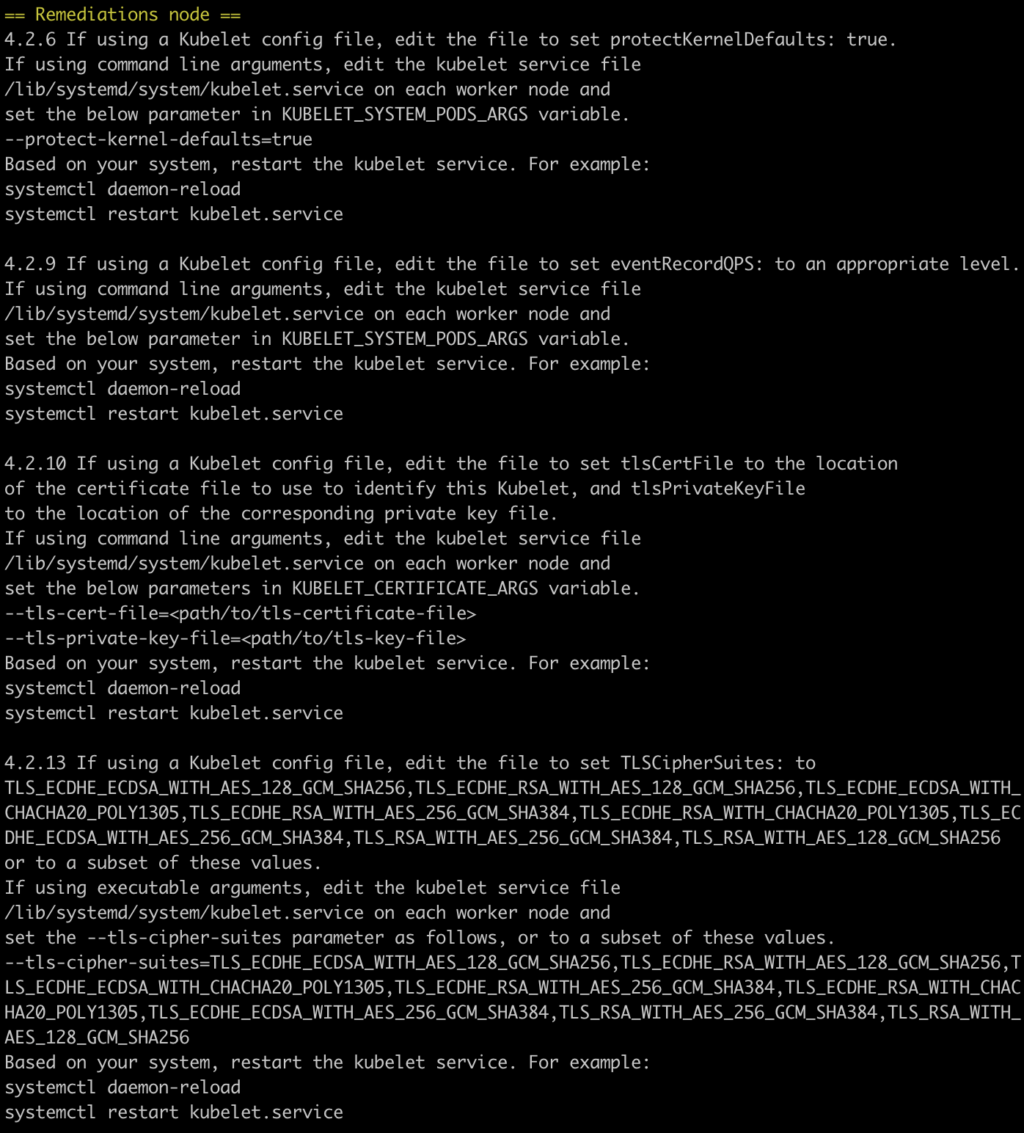
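A manual check of this kind can be scripted. The sketch below is only an illustration: it assumes GNU coreutils `stat`, and the helper names `file_mode_owner` and `is_mode_at_most` are made up for this example. The `-L` flag makes `stat` follow symlinks, so the permissions reported are those of the real file.

```shell
# Print "mode owner:group" for a file, following symlinks (-L).
# Assumes GNU coreutils stat; BSD/macOS stat takes different flags.
file_mode_owner() {
    stat -L -c '%a %U:%G' "$1"
}

# Succeed if the file has no permission bit set beyond the given
# maximum octal mode (e.g. 644). Hypothetical helper for illustration.
is_mode_at_most() {
    # $1 = file, $2 = maximum octal mode
    mode=$(stat -L -c '%a' "$1") || return 2
    # A leading 0 makes the shell read the numbers as octal.
    [ $(( 0$mode & ~0$2 & 0777 )) -eq 0 ]
}

# Usage (the path is an assumption; adjust it to your cluster):
#   file_mode_owner /etc/kubernetes/kubelet.conf
#   is_mode_at_most /etc/kubernetes/kubelet.conf 644 && echo "permissions OK"
```

If the first command prints something like a mode of 644 or stricter and root ownership, the manual result agrees with what kube-bench reported for 4.1.5 and 4.1.6.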

However, it is the recommendations for fixing a particular problem that make kube-bench such a great tool.

An action plan is suggested for all the tests that ended up with a [FAIL] or [WARN].
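When a report is long, it can help to pull out only the checks that need attention. The following is a rough sketch, assuming the report was saved to a text file and that every check line starts with its status in square brackets (for example `[FAIL] 4.2.6 ...`). The function names and the `report.txt` file name are hypothetical.

```shell
# Print the check lines of a saved kube-bench report that carry a given
# status. The report is read from stdin.
# Assumes each check line begins with "[STATUS] ".
checks_with_status() {
    # $1 = status, e.g. FAIL, WARN or PASS
    grep "^\[$1] " || true   # no matches is not treated as an error
}

# Count the checks with a given status.
count_status() {
    checks_with_status "$1" | wc -l | tr -d ' '
}

# Usage (report.txt is a hypothetical file holding kube-bench output):
#   checks_with_status FAIL < report.txt
#   count_status WARN < report.txt
```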

For instance, for 4.2.6, kube-bench suggests passing the --protect-kernel-defaults=true flag to the kubelet when starting. However, you should keep in mind that once this flag is activated, the kubelet will no longer be able to make changes to sysctl. This will result in it becoming dysfunctional until you manually correct the inconsistencies.

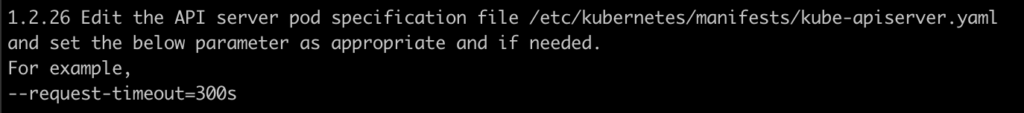
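Before enabling the flag, it may be worth checking whether the node's kernel settings already match what the kubelet expects. The sketch below makes a loud assumption: the list of expected values comes from commonly published hardening guides, not from this article, so verify it against your kubelet version. Both function names are hypothetical.

```shell
# Kernel settings the kubelet is commonly documented to require when
# --protect-kernel-defaults=true is set. ASSUMPTION: these values are
# not taken from the article; check them for your kubelet version.
expected_sysctls() {
    cat <<'EOF'
vm.overcommit_memory=1
vm.panic_on_oom=0
kernel.panic=10
kernel.panic_on_oops=1
kernel.keys.root_maxkeys=1000000
kernel.keys.root_maxbytes=25000000
EOF
}

# Read "key=value" lines from stdin and compare each one with the value
# returned by a reader command ($1, default "sysctl -n").
# Prints every mismatch and returns non-zero if at least one was found.
report_sysctl_mismatches() {
    reader=${1:-sysctl -n}
    status=0
    while IFS='=' read -r key want; do
        have=$($reader "$key" 2>/dev/null) || have=""
        if [ "$have" != "$want" ]; then
            echo "$key: expected $want, found ${have:-<unset>}"
            status=1
        fi
    done
    return $status
}

# Usage on a node:
#   expected_sysctls | report_sysctl_mismatches
```

The reader command is a parameter only so the comparison can be tried out without touching a real node.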

Here is another example:

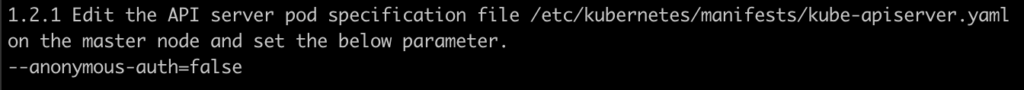

Kube-bench suggests disabling anonymous access to kube-apiserver, but doing so breaks the liveness and readiness probes on the apiserver Pod. As a result, the Pod will fail from time to time.

One more example is that kube-bench recommends specifying --kubelet-certificate-authority. However, this also renders some of the features unusable…

It is also worth noting that some tests produced incorrect results for no apparent reason. For example, this test failed:

However, if you look at the configuration, the desired value is there:

Recap

As a provisional conclusion regarding kube-bench, I would like to laud its easy and flexible operation, its extensive support and periodic updates, its wide range of checks, and clear recommendations on what to do to make your cluster secure. However, I would like to reiterate the dangers of mindlessly following the recommendations.

It’s also not entirely clear what to do with the recommendations. On the one hand, you can break the cluster with the suggested commands. On the other hand, ignoring them generates frustration and fear for the safety of the cluster.

kube-hunter

kube-hunter is a Python tool designed to discover vulnerabilities in a Kubernetes cluster. It’s different from the previous utility as it assesses the cluster protection from the point of view of the ‘attacker’. It also features quite a rich history: it has been in development since 2018 and has 3500+ stars on GitHub.

We’ll run and review it on the same Kubernetes 1.19 cluster.

Installation

The installation boils down to running a simple command:

pip3 install kube-hunter

You can also run it with Docker:

docker run -it --rm --network host aquasec/kube-hunter

Selecting scan mode

The user is offered a choice of 3 scanning options:

- Remote scanning — checking a specific IP address or DNS name. kube-hunter attempts to find vulnerabilities in a cluster at some address;

- Interface scanning — as the name suggests, kube-hunter does some interface scanning. The functionality is the same as in the first option. However, it searches for vulnerabilities on all local interfaces;

- Network scanning — the same type of search but with the CIDR specified.

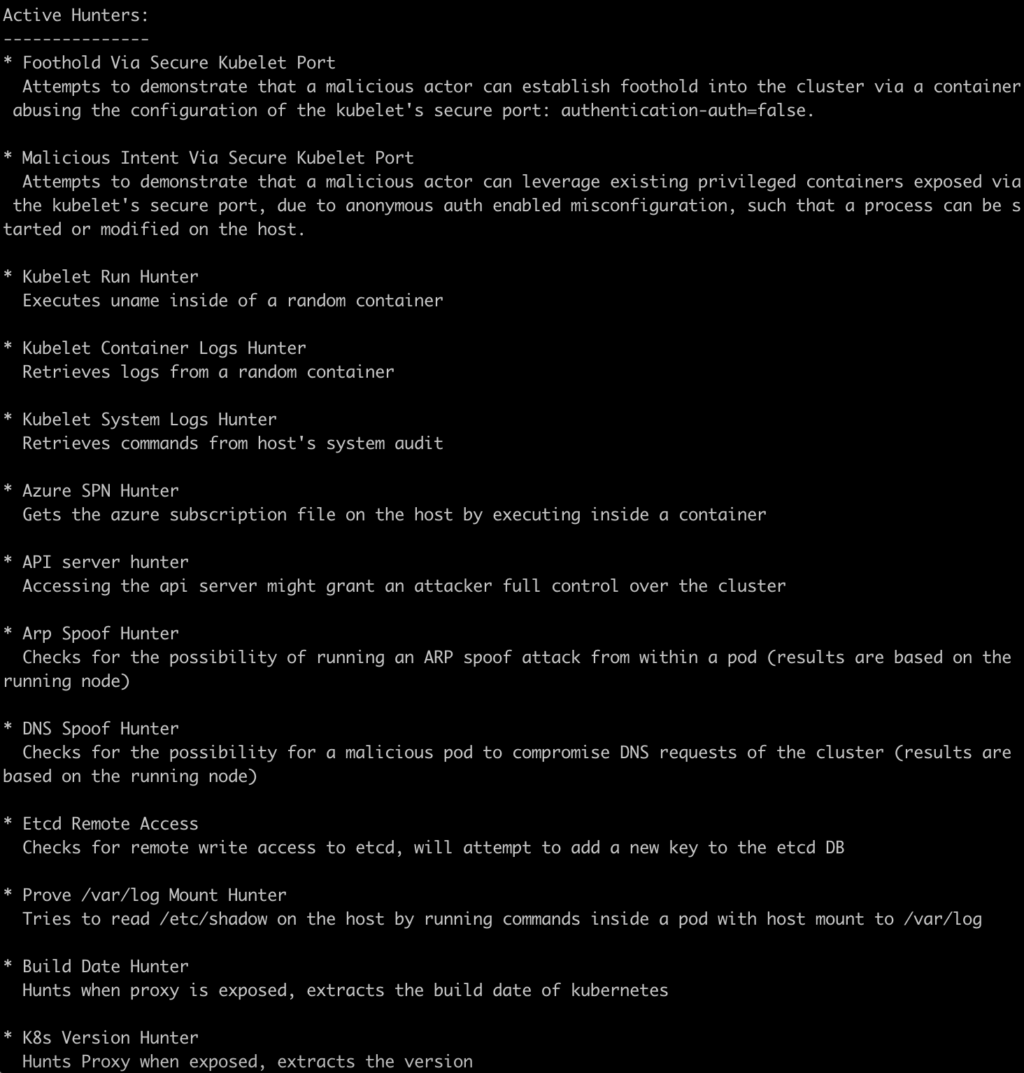
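The three modes can also be selected non-interactively with command-line flags. Here is a small sketch that maps each mode to its flag; the `kube_hunter_cmd` wrapper is hypothetical and only prints the command rather than running it.

```shell
# Print the kube-hunter command line for one of the three scan modes.
# Hypothetical helper: it only echoes the command, it does not run it.
kube_hunter_cmd() {
    # $1 = mode (remote|interface|network), $2 = address or CIDR
    case "$1" in
        remote)    echo "kube-hunter --remote $2" ;;
        interface) echo "kube-hunter --interface" ;;
        network)   echo "kube-hunter --cidr $2" ;;
        *)         echo "unknown mode: $1" >&2; return 1 ;;
    esac
}

# Usage (the CIDR below is a placeholder):
#   kube_hunter_cmd network 192.168.0.0/24
```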

Active Hunting mode, in which the application uses the discovered vulnerabilities to make changes to the cluster, deserves special mention. kube-hunter, for example, tries to write something to etcd, execute ‘uname -a’ in a random Pod, or read /etc/shadow from the Pod where /var/log is mounted. Below is a complete list of the “active hunters” included in the utility:

Running

To get a better feel for the tool, let’s run a passive scan straight from the master node: first via the public address and then via the local one.

The Remote scanning mode can be started using the following command: kube-hunter --remote <address>. In this mode, the tool scans one target IP address. As you can see below, none of the cluster components are accessible from outside:

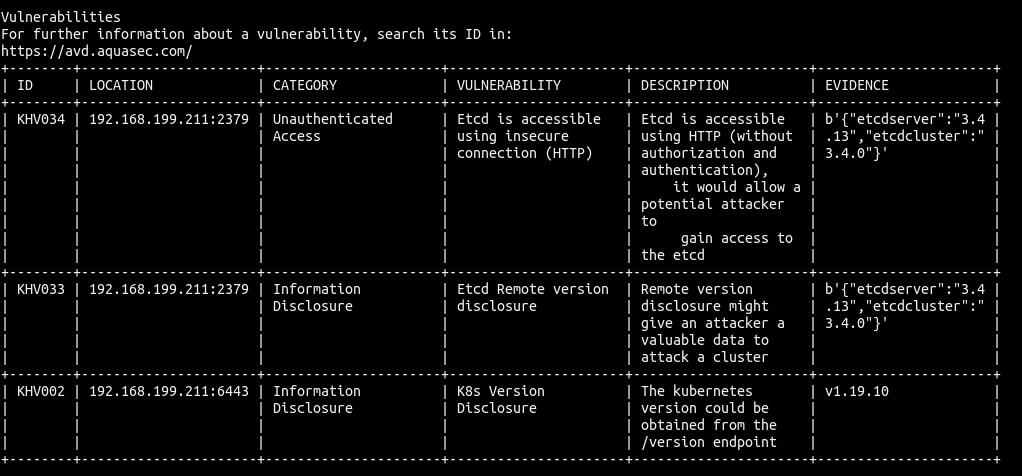

The situation is different when scanning the local interface: kube-hunter discovered the API Server, etcd, Kubelet API, and even managed to detect the Kubernetes version in the cluster (non-critical vulnerability).

Simulating a vulnerability

To demonstrate how kube-hunter seeks out vulnerabilities, I will enable an HTTP connection for etcd by changing its configuration in /etc/kubernetes/manifests/etcd.yaml. kube-hunter immediately detected an unsecured etcd endpoint and warned that a potential attacker could exploit the vulnerability to access etcd.
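The same kind of misconfiguration can be spotted by inspecting the manifest directly. The sketch below assumes the change was made in the `--listen-client-urls` argument (the article does not say which setting was edited) and uses a hypothetical function name.

```shell
# Succeed if the etcd manifest exposes a client listener over plain HTTP.
# ASSUMPTION: only --listen-client-urls is inspected; other arguments,
# such as a metrics listener, are deliberately ignored.
etcd_has_plain_http_client_url() {
    # $1 = path to the etcd manifest
    # 'http://' does not match inside 'https://', so TLS URLs are skipped.
    grep -E -- '--listen-client-urls=' "$1" | grep -q 'http://'
}

# Usage:
#   if etcd_has_plain_http_client_url /etc/kubernetes/manifests/etcd.yaml; then
#       echo "etcd accepts unencrypted client connections"
#   fi
```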

Recap

kube-hunter is a viable tool for pentesting a Kubernetes cluster. It’s easy to install and run. In addition, it gives you an idea of what your cluster looks like through the eyes of an attacker.

However, as for the command’s output, I couldn’t find any tips on fixing the discovered vulnerabilities. For instance, kube-hunter might say (and quite reasonably!) that the API Server is not patched for CVE-2019-11247, but what do you do about that?

In addition, the tool scans networks very aggressively, and ISPs’ netscan detectors may react to that.

Conclusion

Both utilities appear pretty mature and convenient to work with and provide a good overview of cluster security. Despite having some flaws, they are a good starting point for those concerned about cluster security.

Although the CIS Kubernetes Benchmark provides an impressive list of infrastructure checking options, you should consider them as no more than a first step. Keep in mind that security is not limited to the cluster settings. It’s just as important to keep the applications running in the containers up-to-date, prevent unauthorized access to the image registry, use secure networking protocols, monitor application activity, and much more.

P.S. Kubescape

In August 2021, the National Security Agency and the Cybersecurity and Infrastructure Security Agency published a joint report, Kubernetes Hardening Guidance.

This report attracted a great deal of interest from the Kubernetes community. As a result, the first tool to test K8s installations for compliance with the requirements in this document has already become available. It’s called kubescape and has over 5000 GitHub stars as of the time of this publishing. You’d likely want to try it out too:
